Designing human-AI interaction for 10,000+ enterprise support agents

A feature request to "prioritise angry customers faster" uncovered a deeper problem: agents wouldn't act on AI data they couldn't understand. This is how we designed for trust instead of speed.

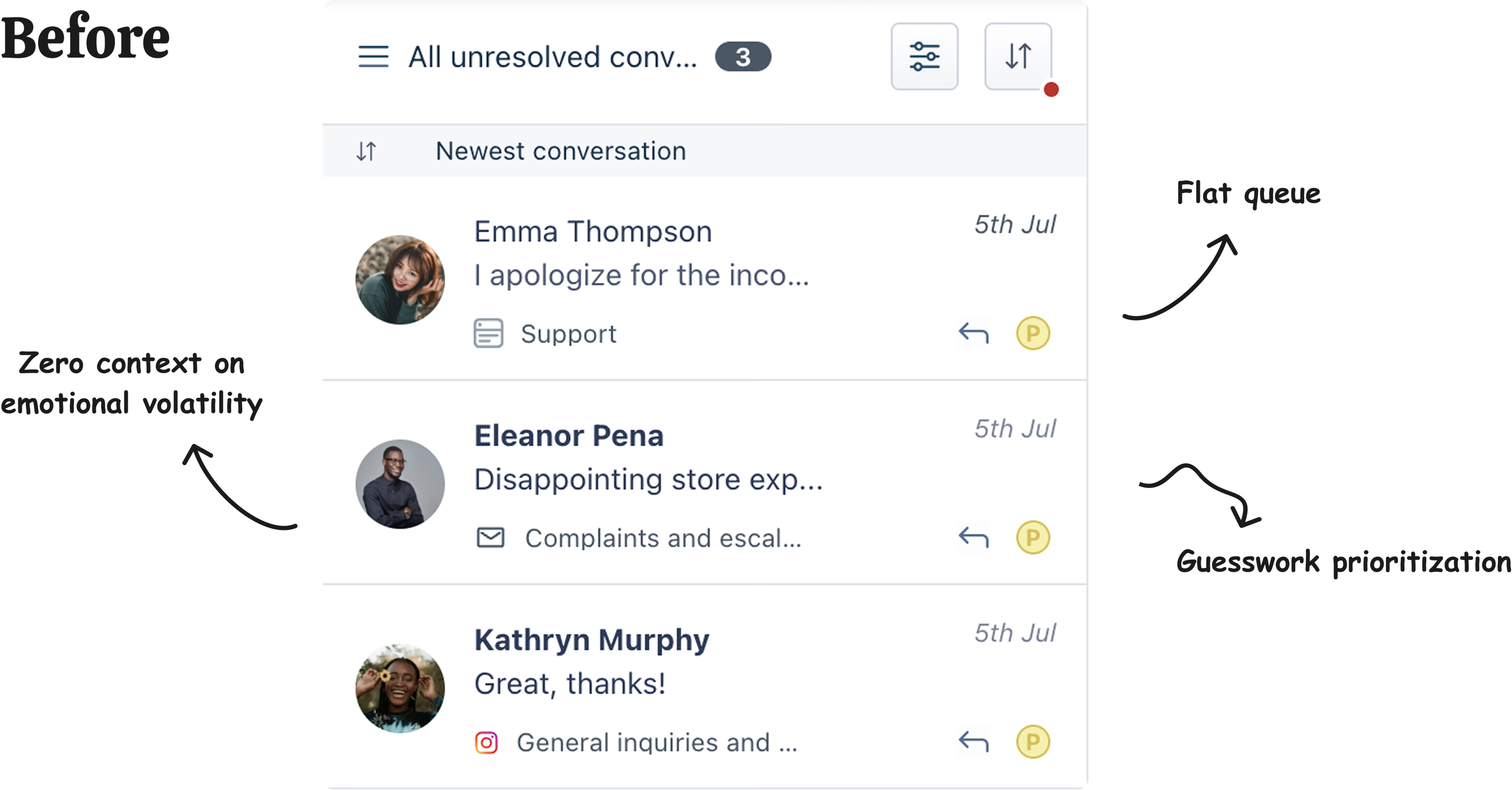

The problem beneath the problem

The brief was practical: Freshworks had an ML model that predicted customer sentiment from conversation text. Surface it to agents, the theory went, and they'd know exactly which customers to prioritise. Faster triage, happier customers.

But during discovery, something more fundamental surfaced. Agents weren't slow because they lacked signals. They were hesitant because they didn't trust signals they couldn't verify.

"I'd rather scan every conversation myself than act on something I don't understand."

(Support agent, enterprise customer)

This was the real problem. Agents managing five simultaneous conversations couldn't afford to be wrong. A misread customer who escalated meant a manager call, a service failure, a broken relationship. When the cost of a mistake is high, people default to what they can control, even if it's slower.

The design challenge shifted from "how do we surface sentiment data" to "how do we make agents trust AI enough to act on it?"

Design constraint: The ML model was still being refined throughout the design phase. I had to build the human-facing layer without knowing the model's final accuracy. Rather than treating this as a blocker, I treated uncertainty as a design material — the interface needed to work even when the model was wrong.

The decision that defined the project

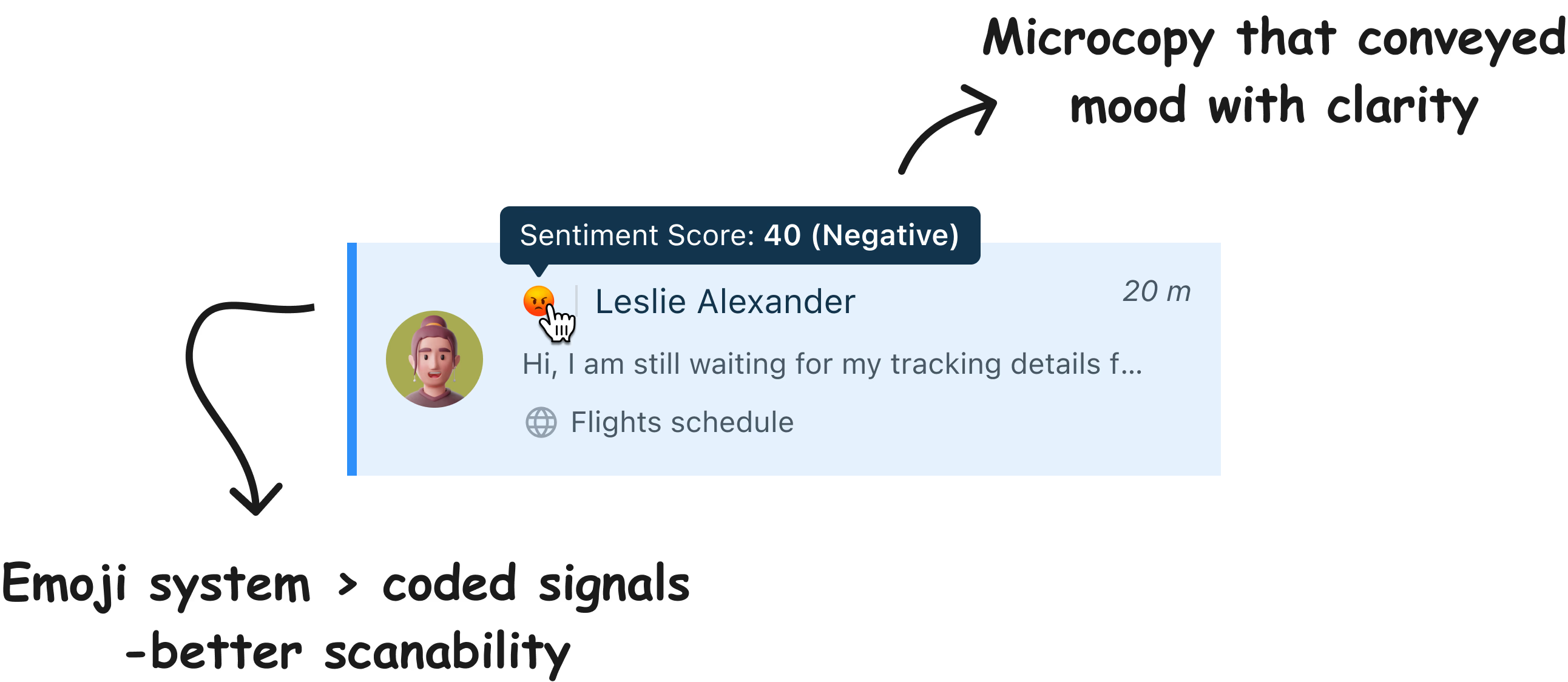

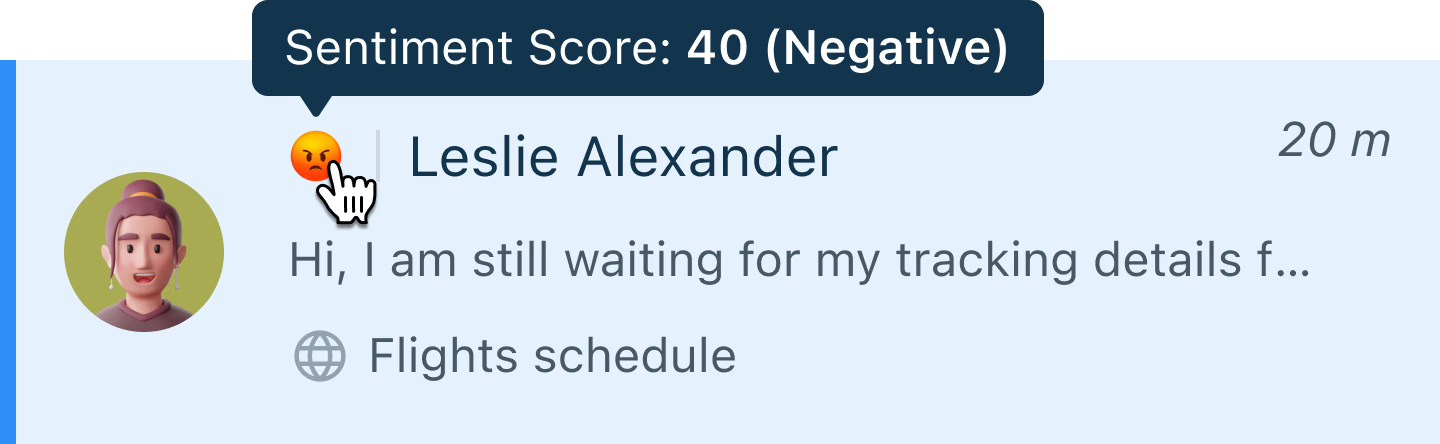

Before anything else, one question had to be answered: how do you represent customer sentiment to an agent already managing five conversations at once? The representation needed to be immediately readable — no translation, no context, no learning curve.

Four options were evaluated against a single criterion: can an agent understand this in under a second, under pressure, without training?

| Approach | Example | Why it fails (or works) |
|---|---|---|
| Raw score | 0.87 | Requires mental translation under pressure every time. |
| Coloured cards | | Colour-to-meaning mapping must be learned and recalled. |
| Directional arrows | ↑ → ↓ | Communicates direction but not magnitude or urgency. |
| Emoji faces (selected) | 😡 😟 😐 🙂 😊 | Maps to existing mental models. Zero onboarding. Universally understood. |
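To make the jump from raw score to emoji face concrete, here is a minimal sketch of how a score could be bucketed into the five faces. It is an illustration only: the band edges, the 0-to-1 range, and the assumption that a higher score means a happier customer are all hypothetical, since the actual mapping used in the product isn't documented here.

```python
from bisect import bisect_right

# Hypothetical band edges: four cut points split [0, 1] into five bands.
# ASSUMPTION: higher score = more positive sentiment. The real model's
# scale and thresholds are not stated in this case study.
BAND_EDGES = [0.2, 0.4, 0.6, 0.8]
FACES = ["😡", "😟", "😐", "🙂", "😊"]


def face_for_score(score: float) -> str:
    """Return the emoji face for a sentiment score in [0, 1]."""
    if not 0.0 <= score <= 1.0:
        raise ValueError(f"score must be between 0 and 1, got {score}")
    # bisect_right counts how many band edges are <= score,
    # which is exactly the index of the band the score falls in.
    return FACES[bisect_right(BAND_EDGES, score)]


if __name__ == "__main__":
    for s in (0.05, 0.5, 0.87):
        print(s, face_for_score(s))
```

The point of the sketch is that the translation happens once, in the system, so the agent never has to do it in their head.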

"Trust isn't built through sophistication. It's built through instant comprehension."

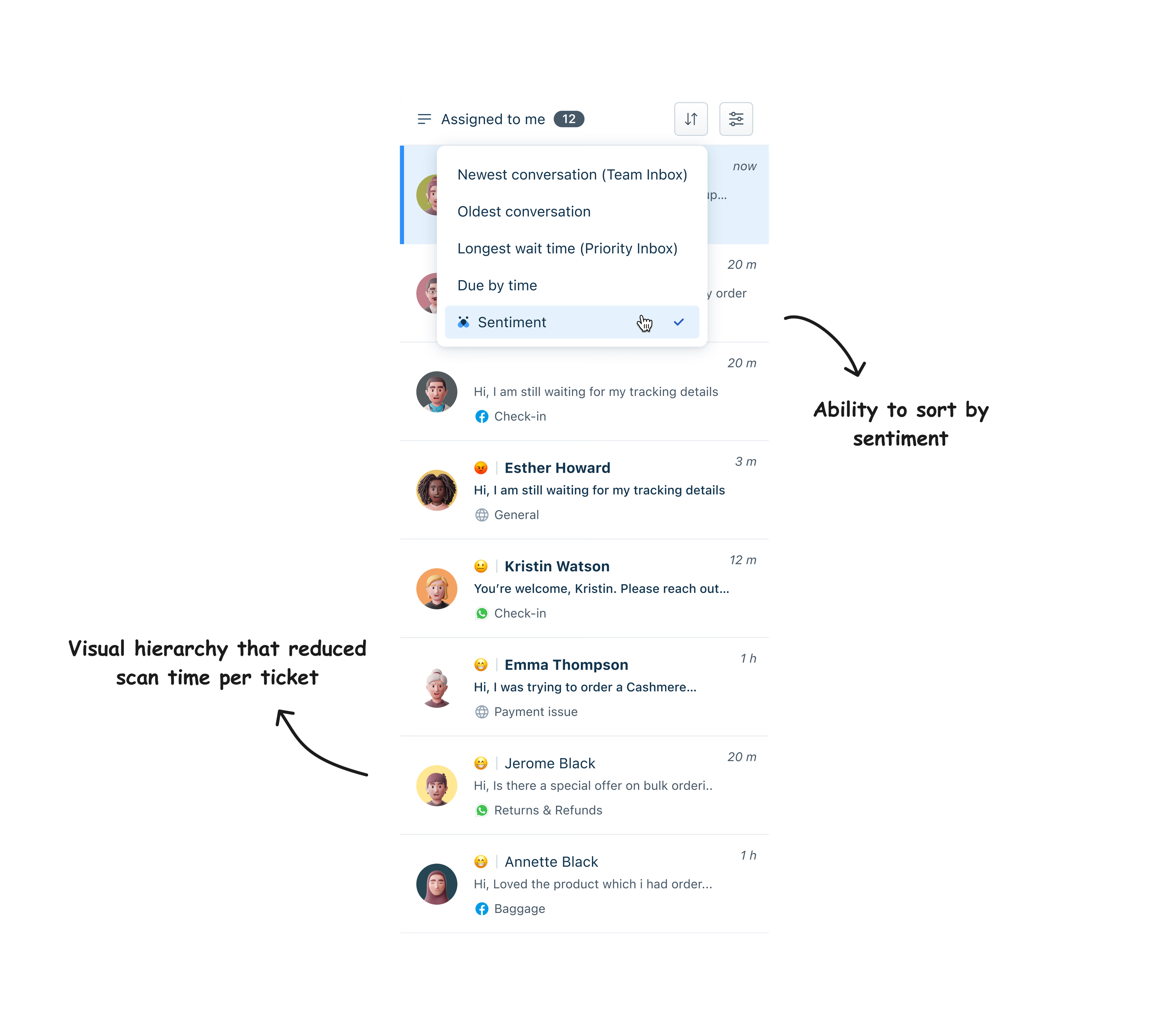

Three decisions that built trust

Choosing the right representation was necessary but not sufficient. For agents to genuinely rely on the system, trust had to be built at three levels: cognitive (can I understand it?), behavioural (do I feel in control?), and organisational (does it fit how we work?).

At the cognitive level, the emoji system required zero onboarding. Agents already knew what a distressed face meant; the product just needed to surface that signal at scale. Comprehension in under one second.

At the behavioural level, agents could sort their queue by sentiment, override the AI's assessment when they disagreed, and flag wrong predictions. Every override fed back into model improvement, so the system got smarter while agents stayed in charge.
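The sort, override, and feedback behaviour can be sketched roughly as follows. This is a simplified model under stated assumptions, not the shipped implementation: the class names, the sentiment labels, and the shape of the feedback log are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical sentiment labels, ordered most upset first.
SEVERITY = ["angry", "worried", "neutral", "content", "happy"]


@dataclass
class Conversation:
    customer: str
    predicted: str                   # label produced by the model
    override: Optional[str] = None   # label set by the agent, if any

    @property
    def effective(self) -> str:
        # The agent's judgment always wins over the model's prediction.
        return self.override or self.predicted


@dataclass
class AgentQueue:
    conversations: list = field(default_factory=list)
    # Each entry: (customer, model label, agent label). Assumed to be
    # what gets passed on for model improvement.
    feedback: list = field(default_factory=list)

    def override_sentiment(self, customer: str, label: str) -> None:
        if label not in SEVERITY:
            raise ValueError(f"unknown sentiment label: {label}")
        for conv in self.conversations:
            if conv.customer == customer:
                conv.override = label
                self.feedback.append((customer, conv.predicted, label))
                return
        raise KeyError(customer)

    def sorted_by_sentiment(self) -> list:
        # Most upset customers first; ties keep their original order.
        return sorted(self.conversations,
                      key=lambda c: SEVERITY.index(c.effective))
```

In this sketch an override changes the queue order immediately and leaves a record of the disagreement, which is the combination that kept agents in control while still giving the model something to learn from.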

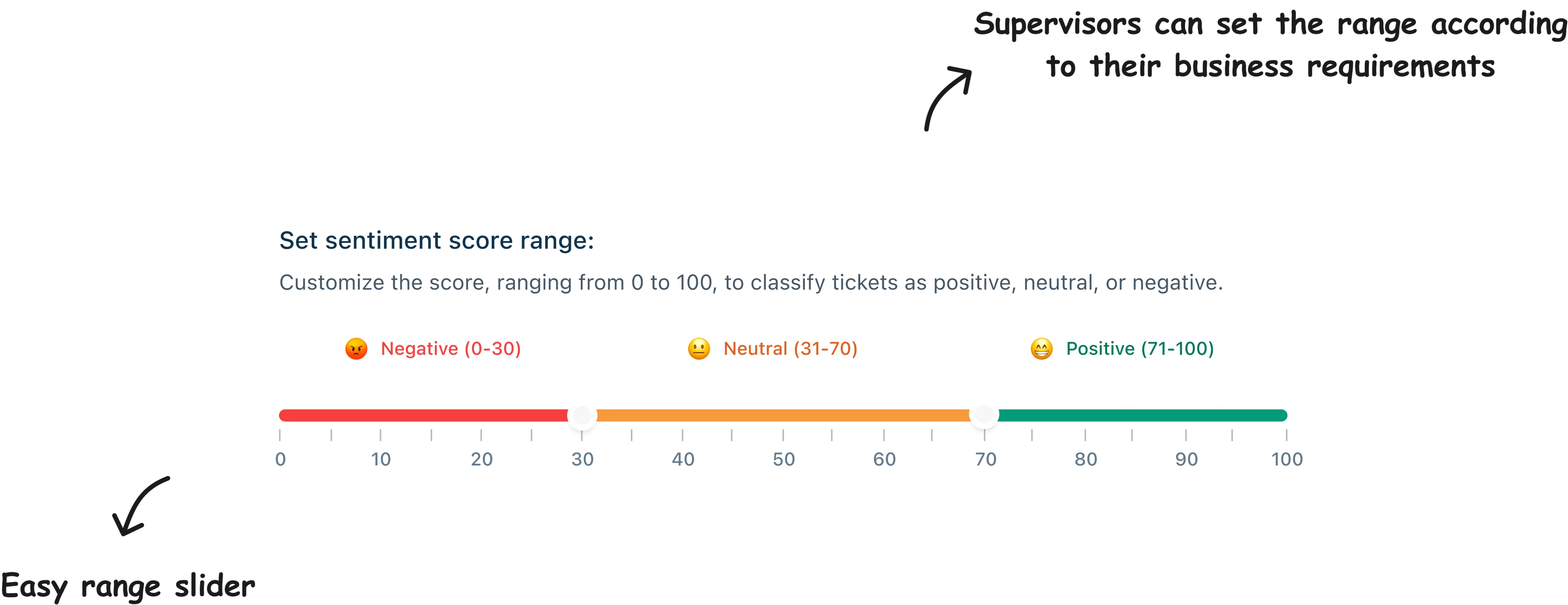

At the organisational level, different companies defined "urgent" differently. A fintech company had a lower escalation threshold than a retail brand. Parameters were configurable at the company level, without exposing any of that complexity to individual agents.
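One way to picture company-level configuration is a per-company policy looked up behind the scenes. The sketch below is hypothetical throughout: the company identifiers, the threshold values, and the idea of a single "negativity" number between 0 and 1 (higher meaning more upset) are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EscalationPolicy:
    # A conversation counts as urgent once negativity reaches this value.
    # ASSUMPTION: negativity is in [0, 1], higher = more upset.
    urgent_at: float


# Hypothetical defaults and per-company settings.
DEFAULT_POLICY = EscalationPolicy(urgent_at=0.8)
COMPANY_POLICIES = {
    "fintech-co": EscalationPolicy(urgent_at=0.6),  # escalates sooner
    "retail-co": EscalationPolicy(urgent_at=0.8),
}


def is_urgent(company_id: str, negativity: float) -> bool:
    """Apply the company's own threshold; agents only see the result."""
    policy = COMPANY_POLICIES.get(company_id, DEFAULT_POLICY)
    return negativity >= policy.urgent_at
```

With these example values, the same conversation at a negativity of 0.7 would be urgent for the fintech company and not for the retail brand, while the agent's view stays identical in both cases.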

Agents started relying on the system in week one

The real measure of success wasn't adoption rate or CSAT. It was behavioural change. Within the first week of rollout, agents were sorting their queues by sentiment without being prompted. The tool had become part of how they worked — not a layer added on top.

"Agents stopped seeing the AI as something trying to replace their judgment — and started seeing it as something that confirmed it."

That reframing was the outcome. The AI wasn't claiming to be smarter than the agents. It was a tool that let agents apply their expertise at scale — to more customers, faster, with less cognitive load. Trust came from the system making them better at their job, not from the system being impressive.

What designing for AI actually means

AI features fail when designers treat the model as the product. The model is infrastructure. The product is the human experience of acting on what the model produces — and that experience lives entirely in the design layer.

This project reinforced something important: when you're designing for AI, accuracy is not your primary design problem. Accuracy is an engineering problem. Your design problem is what happens in the gap between "the model produces a prediction" and "a human takes an action."

Designing for AI means designing for its limits. The only question that matters is: who needs to trust this system, and what would make them stop?

In this case, trust was broken by opacity — agents couldn't see why a customer had been flagged as upset. The solution wasn't explainability dashboards or confidence intervals. It was simpler: giving agents the ability to override, so they never had to fully surrender their judgment to something they couldn't see inside.